Research

Latest publication:

Excitations and Resonances: Misinterpreted Actions in Neon Meditations. A presentation of Neon Meditations, given at the sound design seminar at the Institute of Musicology in Oslo, was published in a Springer anthology in 2024.

My current research interests have expanded towards visual arts. A book on art theory is in the making.

Self-Organised Sound with Autonomous Instruments

Aesthetics and experiments

This was my PhD project in music technology at the Department of Musicology, University of Oslo, from 2008 to 2012.

What are autonomous instruments?

Occasionally one hears of semi-autonomous instruments. These are typically digital instruments that use machine listening techniques to interact with a performer. Remove the realtime interaction and the performer, and an autonomous instrument is what remains.

A more technical and precise term for the kind of instrument this project deals with would be feature extractor feedback systems, or feature-feedback systems for short. They are

- feedback systems containing feature extractors in the loop

- a combination of sound synthesis and algorithmic composition

- but not necessarily interactive in realtime

The basic idea can also be described with the metaphor of a musician playing a note while constantly listening to the produced sound and adjusting aspects of the playing technique. Here the aim is not to model actual instruments or the way musicians interact with them. Instead, this project is about building autonomous instruments and exploring their musical potential.

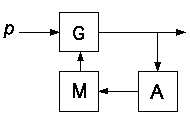

Conceptually, feature-feedback systems can be separated into three units: a signal generator, a feature extractor, and a mapping from the analysed features to the control parameters of the signal generator. Hence there is a constant feedback from the sound generated at any moment to the settings that influence how the sound is generated.

Schematic of autonomous instruments. 'G' represents any signal generator, 'A' is a feature extractor, and 'M' stands for mapping.
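The three units can be illustrated with a minimal sketch. The particular choices here are assumptions made only for illustration and are not taken from the thesis: the generator G is a plain sine oscillator, the feature extractor A measures RMS amplitude over a short sliding window, and the mapping M is a linear map from that feature to the oscillator frequency. All function and parameter names are hypothetical.

```python
import math
from collections import deque

def generator(phase, freq, sr):
    """G: advance a sine oscillator by one sample; return (sample, new_phase)."""
    phase = (phase + 2.0 * math.pi * freq / sr) % (2.0 * math.pi)
    return math.sin(phase), phase

def extract_rms(window):
    """A: root-mean-square amplitude of the most recent samples."""
    return math.sqrt(sum(s * s for s in window) / len(window))

def mapping(feature, base_freq, depth):
    """M: map the feature (between 0 and 1) to an oscillator frequency in Hz."""
    return base_freq + depth * feature

def run(n_samples=2000, sr=8000, window_len=64, base_freq=220.0, depth=200.0):
    """Run the closed loop G -> A -> M -> G with no external input."""
    window = deque(maxlen=window_len)  # sliding analysis window
    phase, freq = 0.0, base_freq
    signal, freqs = [], []
    for _ in range(n_samples):
        x, phase = generator(phase, freq, sr)   # generate one sample
        window.append(x)
        feature = extract_rms(window)           # analyse recent output
        freq = mapping(feature, base_freq, depth)  # update the control parameter
        signal.append(x)
        freqs.append(freq)
    return signal, freqs

if __name__ == "__main__":
    signal, freqs = run()
    print(f"frequency range: {min(freqs):.1f} to {max(freqs):.1f} Hz")
```

Because the window is shorter than two periods of the oscillator, the measured RMS fluctuates with the phase, so the frequency keeps being nudged by the sound it has just produced. Nothing enters the loop from outside once the initial settings are fixed.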

In adaptive audio effects (A-DAFx), effect parameters are controlled by features extracted from an input sound. Feature-feedback systems take their own output as a point of departure, hence they may be thought of as self-organising systems.

The feature-feedback systems are studied from the point of view of chaos, nonlinear dynamical systems and complex systems theory. The project as a whole aims towards a better understanding of these synthesis models in terms of relations between parameter spaces and perceptual dimensions.

The word autonomous should be understood roughly as it is used in the context of differential equations. Essentially, it means that the user has no realtime control over the instrument.
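For reference, the standard distinction in differential equations can be written as follows. The symbols $x$ (the state), $f$ (the vector field) and $t$ (time) are generic notation chosen for this illustration, not notation taken from the thesis.

```latex
\dot{x} = f(x) \quad \text{(autonomous)},
\qquad
\dot{x} = f(x, t) \quad \text{(non-autonomous)}
```

In the autonomous case the evolution depends only on the current state. Realtime control by a performer would correspond to an explicit time-dependent input, which is exactly what the autonomous form excludes.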

PhD Thesis and sound examples

Self-organised Sound with Autonomous Instruments: Aesthetics and experiments. The links to sound examples in this document are unfortunately no longer valid. The sound examples are also available for download as a zip file from the University of Oslo along with the thesis.

Abstract in English and Norwegian.

Publications

Excitations and Resonances: Misinterpreted Actions in Neon Meditations. Book chapter in Sonic Design (Springer, open access), 2024.

Making Complex Music with Simple Algorithms, is it Even Possible? Revista Vortex, v.9, n.2, 2021.

Algorithmic Composition by Autonomous Systems with Multiple Time-Scales, Divergence Press, November 2021. Preprint version available at the arXiv repository.

(The two papers above both grew out of the algorithmic composition project Kolmogorov Variations.)

Smoothness under parameter changes: derivatives and total variation

Poster at the SMC conference in Stockholm, 2013.

The Constant-Q IIR Filterbank Approach to Spectral Flux

Corrected version of a paper presented at the SMC conference in Copenhagen, 2012.

Logistic map with a first order filter

Published in International Journal of Bifurcation and Chaos, Volume 21, Number 6, June 2011 (preprint version).

Self-Organised Sounds with a Tremolo Oscillator

Poster at DAFx-10 in Graz, September 2010.

Feature Extraction for Self-Adaptive Synthesis

Published in SonicIdeas/IdeasSonicas Vol. 1, No. 2, pp. 21-28, 2009.

Nonlinear Filters

Proceedings of the ICMC 2007, Vol 1, pp. 283-286. Copenhagen, Denmark.

A review of some common nonlinear filters.

Selected Essays

Automated Composition using Autonomous Instruments. Published in Seismograf, 2014.

Automatisk komposition med autonoma instrument. Lydskrift, 2013.

Swedish version of the above paper.

Lo-Fi Adventures in Obsolete Media

Reflections on the use of lo-fi techniques in some of my compositions.

Other presentations

Synchronisation (mostly about the Kuramoto model).

Feature Extractor Feedback Systems. Brief summary of some ideas developed in the thesis.

Filterbank Flux. Presented at the SMC conference in Copenhagen, 2012.

Aspiration Noise. Unpublished poster for some course in 2009.

Frequency Shifting Almost Demystified. Demonstration of single sideband modulation with lots of sound examples.

Synchronization of chaotic modules. Discussion of chaos control, system identification and generative modular patches.

A small but complex world of self-organising oscillators. An autonomous differential equation used for the composition of a short piece.

Variations of FM. Some less well known facts about frequency modulation.

Nonlinear oscillators and FM. More ideas for sound synthesis with chaotic and nonlinear oscillators.

Time domain signal descriptors, ideas and source code.